Nearly every LLM you work with today is a Transformer. Understanding the architecture does not mean implementing it. It means knowing why context placement affects your prompts, why RAG needs two separate model calls, and why bigger models tend to know more facts. This post breaks down nine core concepts (self-attention, Q/K/V, attention scores, multi-head attention, FFN, layer norm, residual connections, positional encoding, and encoder vs decoder) using plain English analogies and visual diagrams. No math, no ML history, no prior knowledge assumed.


Transformer Architecture Explained: 9 Essential Concepts for AI Engineers

- Prabhat Kashyap

The transformer architecture is the foundation of every LLM you use today. GPT-4, Claude, Gemini, Llama. All of them are built on it.

When you write a system prompt, chunk a document for RAG, or wonder why your model loses context beyond 128k tokens, the Transformer architecture is what is happening under the hood. You do not need to build one. But understanding how it works changes how you use it.

This post covers nine core concepts with plain English analogies, visual diagrams, and direct callouts for how each one affects your day-to-day work. No math. No history. No comparisons to older models.

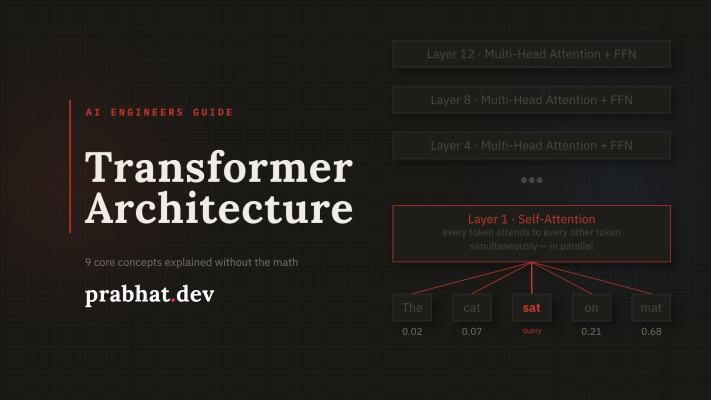

1. Self-Attention: Everyone Talks to Everyone at Once

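This is the most important idea in the whole architecture. When the model reads a sentence, every word looks at every other word simultaneously. Not one at a time. Not left to right. All at once, in parallel. (Decoder-only models add one restriction, covered in section 9: each token can only look at the tokens that come before it.)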

Think of a meeting room where every person can pass a sticky note to every other person at the exact same instant. Each note asks: “How relevant are you to what I am trying to say?” Every person reads all the replies and decides who to pay attention to. That is self-attention.

In practice, the word “bank” in “I deposited money at the bank” pays very high attention to “deposited” and “money”, and almost nothing to “I” or “at”. The model figures this out entirely by itself during training. You never told it what “bank” means.

What this means in practice: every token you add to a prompt shifts how the model weighs everything else. A vague instruction early in a long prompt can get drowned out by more specific text near the actual query. This is why context placement matters when you are writing prompts.

2. Query, Key, Value: The Search Engine Inside Every Token

The attention mechanism runs every token through three different lenses at the same time. These are called Q (Query), K (Key), and V (Value). Each one is a vector, produced by multiplying the token's embedding by a weight matrix the model learns during training.

I find the library analogy the clearest way to think about this. Your Query is what you type into the search bar: “books about financial institutions.” The Key is the index tag on each book’s spine: “Banking, Finance, Rivers.” The model matches your query against all keys to find the best fit. Then it opens the book and reads. That content is the Value.

The projections that produce Q, K, and V are all learned during training. The model teaches itself which questions to ask, how to label tokens, and what information to extract. You do not define any of this. It figures it out from billions of examples of human text.
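To make the three lenses concrete, here is a minimal sketch of how one token embedding could be turned into a query, a key, and a value. Everything in it is an assumption for illustration: the helper `matvec`, the 3-number embedding, and the hand-picked matrices `W_q`, `W_k`, `W_v`. A real model learns these matrices and uses thousands of dimensions.

```python
# Toy sketch: project one token embedding into query, key, and value vectors.
# All numbers are made up; a real model learns W_q, W_k, and W_v in training.

def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

embedding = [1.0, 0.0, 2.0]                  # hypothetical 3-dim token embedding

W_q = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]     # "what am I looking for?"
W_k = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]     # "how should others find me?"
W_v = [[0.5, 0.5, 0.0], [0.0, 0.0, 0.5]]     # "what content do I carry?"

q = matvec(W_q, embedding)   # query vector
k = matvec(W_k, embedding)   # key vector
v = matvec(W_v, embedding)   # value vector

print("Q:", q)
print("K:", k)
print("V:", v)
```

The same embedding yields three different vectors because each matrix emphasises different parts of it. That is the whole trick: one token, three roles.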

3. Attention Score: Deciding Who Gets Heard

Once every token has a Q and every other token has a K, the model computes a score for every pair. It is basically asking: how much should token A pay attention to token B?

Think of it like a compatibility score. Every word scores every other word based on how well their Q and K match. After all scores are computed, a softmax step converts the raw numbers into weights between 0 and 1 that sum to 1.0. The result looks like: “spend 60% of your attention on ‘money’, 30% on ‘deposited’, 10% on the rest.”

There is also a scaling step where the score is divided by the square root of the key vector's dimension (the per-head size). Without this, scores can get so large that softmax becomes extremely spiky and essentially ignores everything except the single top match. Scaling keeps the distribution healthy.
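The score, scale, softmax, and blend steps described above can be sketched in a few lines of plain Python. This is a toy for a single query token, not how any production model is written. The function names (`softmax`, `attention`) and the two-dimensional vectors are assumptions chosen for readability.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1.0."""
    peak = max(scores)                        # subtract the max for stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query token."""
    scale = math.sqrt(len(query))             # square root of the key dimension
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    blended = [sum(w * value[i] for w, value in zip(weights, values))
               for i in range(len(values[0]))]
    return weights, blended

# Three tokens with made-up 2-dim keys and values.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]

weights, output = attention(query, keys, values)
print("weights:", weights)
print("output:", output)
```

The first and third keys match the query equally well, so they receive equal weight, and the second key receives less. The output is the corresponding blend of the three value vectors.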

// Conceptual flow, for each token

raw_score = dot_product(Q_token, K_all) // Q·K against every token's key, including its own

scaled = raw_score / sqrt(key_dimension) // scale

weights = softmax(scaled) // sums to 1.0

output = weighted_sum(weights, V_all) // final blend

4. Multi-Head Attention: Eight Experts Reading the Same Sentence

A single attention head produces one set of weights per token, so it tends to capture one kind of relationship at a time. Multi-head attention solves this by running the entire Q/K/V process H times in parallel. Each head can learn a different relationship between tokens.

Picture giving one article to eight editors at the same time. Editor 1 looks for grammatical structure. Editor 2 tracks who the subject of each sentence is. Editor 3 watches for negations and conditionals. Editor 4 spots named entities. Each editor works independently and produces their own notes. Then all the notes get combined into one final verdict. That is multi-head attention. Each head has its own separate Q/K/V matrices, learns different patterns, and the outputs are concatenated and projected back into a single vector at the end.

This is part of why LLMs handle ambiguous, multi-part instructions better than you might expect. When you write “summarise this document in a professional tone focusing on risks”, a useful way to picture it is that different heads can pick up the task, the style constraint, and the content filter in parallel. Real heads are rarely this tidy, but they do not compete. Their outputs get combined.
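The "several editors, one combined verdict" idea can be sketched as follows. To keep it short, this toy makes some loud simplifications that are my assumptions, not part of the architecture: the token vectors are used directly as Q, K, and V (no learned projections), each head simply works on its own slice of the vector, and the final output projection is left out.

```python
import math

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for one query; returns the blended value."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    return [sum(w * value[i] for w, value in zip(weights, values))
            for i in range(len(values[0]))]

def split_heads(vector, num_heads):
    """Cut one vector into num_heads equal slices, one per head."""
    size = len(vector) // num_heads
    return [vector[h * size:(h + 1) * size] for h in range(num_heads)]

def multi_head_attention(queries, keys, values, num_heads):
    key_heads = [split_heads(k, num_heads) for k in keys]
    value_heads = [split_heads(v, num_heads) for v in values]
    outputs = []
    for query in queries:
        merged = []
        for h, q_head in enumerate(split_heads(query, num_heads)):
            head_keys = [kh[h] for kh in key_heads]
            head_values = [vh[h] for vh in value_heads]
            # Each head attends independently, then results are concatenated.
            merged.extend(attend(q_head, head_keys, head_values))
        outputs.append(merged)
    return outputs

# Three tokens, 4 dims each, 2 heads of 2 dims each (made-up numbers).
tokens = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0], [1.0, 1.0, 0.0, 0.0]]
mixed = multi_head_attention(tokens, tokens, tokens, num_heads=2)
print(mixed)
```

Each head sees only its own slice, so the two heads can weight the same three tokens differently before their notes are stitched back into one vector per token.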

5. Feed-Forward Network: Where the Model’s Memory Lives

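After attention figures out which tokens to focus on, each token passes through a feed-forward network (FFN). It is a two-layer neural network applied independently to every token position: it expands the token's vector into a wider hidden layer, then projects it back down. Despite the simple shape, the FFN layers usually hold a large share of the model's parameters.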

Here is the insight most people miss. The attention layer is about relationships: token A relates to token B. The FFN layer is about facts: what does token A, in this context, actually know?

Attention tells the model: “the word ‘Python’ in this sentence is about programming, not snakes” because nearby tokens like “function” and “import” had high attention weight. The FFN then fires a set of neurons that say: “Python means programming language, created by Guido van Rossum, dynamically typed, widely used in AI.” The FFN acts like the model’s knowledge base. This is a large part of why bigger models, which have more FFN parameters, tend to know more facts.

There is research showing you can surgically edit a model’s factual knowledge by updating specific FFN weights without retraining the whole model. This is the basis of model editing and knowledge patching techniques. It suggests that many facts are, at least in part, localised there.
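As a rough sketch of the structure this section describes, here is a two-layer feed-forward network applied to each token on its own. The weights are hand-picked toy values and the names (`linear`, `relu`, `feed_forward`) are mine. ReLU is used because the original Transformer used it; many current models use other activations.

```python
def linear(weights, bias, vector):
    """One dense layer: weights is a list of rows, one row per output unit."""
    return [sum(w * x for w, x in zip(row, vector)) + b
            for row, b in zip(weights, bias)]

def relu(vector):
    return [max(0.0, x) for x in vector]

def feed_forward(token, W1, b1, W2, b2):
    hidden = relu(linear(W1, b1, token))   # expand to a wider hidden layer
    return linear(W2, b2, hidden)          # project back to the model width

# Toy weights: model width 2, hidden width 4 (made-up numbers).
W1 = [[1.0, -1.0], [-1.0, 1.0], [1.0, 1.0], [0.5, 0.0]]
b1 = [0.0, 0.0, 0.0, 0.0]
W2 = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]
b2 = [0.0, 0.0]

tokens = [[2.0, 1.0], [1.0, 2.0]]
# The same weights are applied to every position; tokens never mix here.
outputs = [feed_forward(token, W1, b1, W2, b2) for token in tokens]
print(outputs)
```

Note that the list comprehension processes each token separately. Mixing information between tokens is attention's job; the FFN only transforms what each token already holds.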

6. Layer Normalisation: Keeping the Signal From Exploding

Every token is a long list of numbers called its embedding vector. After each sub-layer (attention or FFN), those numbers can drift badly. Some become very large, others shrink toward zero. Layer normalisation rescales them back to a stable, consistent range before the next sub-layer runs.

Think of mixing eight instruments in a studio. If one guitar suddenly gets 10x louder, it dominates the whole track and everything else becomes inaudible. Layer Norm is the compressor pedal that keeps every signal in a reasonable range so all voices stay audible. Without it, training a deep Transformer tends to become unstable: gradients can explode or vanish, and the model may never converge.

Most modern LLMs use Pre-Layer Norm, which means normalisation happens before each sub-layer rather than after. This stabilises training at large scale and is one of the reasons 70B+ models train reliably without exploding gradients.
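The rescaling described in this section can be sketched like this. It is a simplified version: the function name `layer_norm` and the example vector are assumptions, and the learned scale and shift parameters that real layer norm applies afterwards are omitted.

```python
import math

def layer_norm(vector, eps=1e-5):
    """Rescale one token's vector to roughly zero mean and unit variance.

    Simplified: the learned scale and shift parameters are left out.
    """
    n = len(vector)
    mean = sum(vector) / n
    variance = sum((x - mean) ** 2 for x in vector) / n
    return [(x - mean) / math.sqrt(variance + eps) for x in vector]

# One value has drifted far out of range, like the 10x louder guitar.
drifted = [1.0, 2.0, 100.0, 3.0]
stable = layer_norm(drifted)
print(stable)
```

After normalisation the outlier is still the largest value, but it no longer dwarfs the others, and the whole vector sits in a predictable range for the next sub-layer.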

7. Residual Connections: The Shortcut That Makes Deep Networks Work

A Transformer can have 96, 128, or more layers stacked on each other. Without residual connections, training that deep is nearly impossible. The gradient signal degrades as it flows backwards through all those layers and lower layers simply stop learning.

Imagine driving through 96 city intersections, one per layer. Every traffic light costs you momentum and time. A residual connection is a highway bypass that lets the original signal skip directly to later layers, while the normal processing route still runs in parallel. Gradients flow backward through the highway largely unimpeded, so even the earliest layers get a clear training signal. This is also why early layers can specialise in basic syntax while upper layers build higher-level semantics. The residual stream carries a progressively refined representation all the way through.
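Here is a small sketch of the highway idea in both layer norm arrangements. The function names and the deliberately useless `zero_sublayer` are assumptions for illustration, and `layer_norm` is the simplified version without learned parameters.

```python
import math

def layer_norm(vector, eps=1e-5):
    n = len(vector)
    mean = sum(vector) / n
    variance = sum((x - mean) ** 2 for x in vector) / n
    return [(x - mean) / math.sqrt(variance + eps) for x in vector]

def add(a, b):
    return [x + y for x, y in zip(a, b)]

def pre_ln_block(x, sublayer):
    # Pre-Layer Norm: normalise first, then add the result onto the original x.
    return add(x, sublayer(layer_norm(x)))

def post_ln_block(x, sublayer):
    # Post-Layer Norm: add first, then normalise the sum.
    return layer_norm(add(x, sublayer(x)))

def zero_sublayer(vector):
    """Stand-in for a sub-layer that has learned nothing yet."""
    return [0.0 for _ in vector]

x = [1.0, 2.0, 3.0]
print(pre_ln_block(x, zero_sublayer))    # x passes through untouched
print(post_ln_block(x, zero_sublayer))   # x is preserved in shape but rescaled
```

With a sub-layer that contributes nothing, the Pre-Layer Norm block returns the input exactly. That is the highway: the sub-layer only has to learn what to add on top.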

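// What happens at EVERY sub-layer, both attention and FFN

output = LayerNorm( x + SubLayer(x) ) // Post-Layer Norm, as in the original Transformer

output = x + SubLayer( LayerNorm(x) ) // Pre-Layer Norm, as in most modern LLMs

// x + ... : original x always preserved, this is the highway

// SubLayer(...) : what the sub-layer learned to add on top

8. Positional Encoding: Teaching the Model Where Words Live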

There is a subtle but critical problem with attention: it is position-agnostic by default. If you shuffle all the tokens in a sentence randomly, the raw attention computation produces exactly the same scores, just shuffled the same way. It only looks at which tokens match, not where they sit in the sequence. “The cat bit the dog” and “The dog bit the cat” would look like the same bag of words to the model without positional encoding.

Write every word on a separate flashcard and throw them in a bag. Without positional encoding, the Transformer reaches in, sees all the cards at once, and has no idea which came first, second, or last. Positional encoding is writing a sequence number on each card before they go in. Now the model knows the order and can factor it into every decision it makes.
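One way to write the sequence number on each card is the sinusoidal scheme from the original Transformer paper, sketched below. Many current models use other schemes, so treat this as one example rather than what your model does. The function names and the toy embedding are assumptions.

```python
import math

def sinusoidal_position(pos, d_model):
    """Sinusoidal encoding for one position: sin on even dims, cos on odd dims."""
    encoding = []
    for i in range(0, d_model, 2):
        angle = pos / (10000 ** (i / d_model))
        encoding.append(math.sin(angle))
        encoding.append(math.cos(angle))
    return encoding[:d_model]

def add_position(embedding, pos):
    """Stamp a token embedding with its position in the sequence."""
    encoding = sinusoidal_position(pos, len(embedding))
    return [e + p for e, p in zip(embedding, encoding)]

cat = [0.2, 0.7, 0.1, 0.9]            # made-up embedding for the token "cat"
cat_at_1 = add_position(cat, 1)       # "The cat bit the dog"
cat_at_4 = add_position(cat, 4)       # "The dog bit the cat"

print(sinusoidal_position(0, 4))
print(cat_at_1)
print(cat_at_4)
```

The same word now produces a different vector depending on where it sits, which is exactly the information raw attention was missing.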

Context window limits (4k, 8k, 128k, 1M tokens) are tied in part to how far the positional encoding can accurately represent positions, alongside the memory and compute cost of attending over more tokens. RoPE is the positional scheme many current models use, and scaling techniques such as YaRN exist specifically to stretch it beyond the original training context length. When a vendor advertises an “extended context” model, this is often one of the mechanisms they improved.

9. Encoder vs Decoder: Why BERT, GPT, and T5 Are Fundamentally Different

The original Transformer had two parts: an encoder to read and understand input, and a decoder to generate output word by word. Modern models specialised into using just one of those parts, or both. This is not a minor implementation detail. It determines what the model can and cannot do.

An encoder is like a researcher who reads an entire document before forming an opinion. It sees every word at once (bidirectional). A decoder is like a writer drafting a sentence. It can only see what it has already written, never what comes next (causal, left-to-right). An encoder-decoder is a translator who reads the full source sentence first, then dictates the translation one word at a time.
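The difference between the researcher and the writer comes down to a mask on the attention scores. The sketch below is a toy with all raw scores set to zero so the effect of the mask is easy to see. The function names are assumptions.

```python
import math

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(scores, causal):
    """Row i holds how much token i attends to every token j."""
    rows = []
    for i, row in enumerate(scores):
        if causal:
            # Decoder style: hide every token that comes after position i.
            row = [s if j <= i else float("-inf") for j, s in enumerate(row)]
        rows.append(softmax(row))
    return rows

# Three tokens with equal raw scores everywhere, to isolate the mask.
scores = [[0.0, 0.0, 0.0] for _ in range(3)]

bidirectional = attention_weights(scores, causal=False)   # encoder style
causal = attention_weights(scores, causal=True)           # decoder style

print("encoder:", bidirectional)
print("decoder:", causal)
```

In the encoder case every token spreads its attention over all three tokens. In the decoder case the first token can only see itself, the second sees two tokens, and only the last sees everything.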

| Architecture | Examples | Attention | Best For | Trained With |
|---|---|---|---|---|
| Encoder-only | BERT, RoBERTa, BGE | Bidirectional, sees all tokens | Embeddings, classification, RAG retrieval | Masked Language Model |
| Decoder-only | GPT-4, Claude, Llama, Gemini | Causal, past tokens only | Generation, chat, reasoning, code | Next token prediction |
| Encoder-Decoder | T5, BART, mT5 | Both plus cross-attention | Translation, summarisation, text-to-text tasks | Sequence-to-sequence |

If you are building a RAG system, your embedding model (BGE, text-embedding-3) is typically an encoder-style model. Your generation model (Claude, GPT-4) is decoder-only. They are different models, trained for different jobs, with different internal attention patterns, which is why you need two separate model calls. One reads everything at once to build a representation. The other generates left-to-right, one token at a time.

The Transformer Architecture: Mental Model in One View

Every time an LLM processes your prompt, this pipeline runs once per layer, repeated 32 to 128 or more times:

| Concept | What It Does | Why You Care |
|---|---|---|
| Self-Attention | Every token attends to every other simultaneously | Context placement in prompts matters |
| Q / K / V | Ask, Match, Receive: a learned search inside each token | The model figures out relevance itself |
| Attention Score | Q·K, scale, softmax, weighted blend of V | How importance becomes a number |
| Multi-Head | H heads run in parallel, each learning different relationships | Handles multi-part instructions well |
| FFN | Per-token knowledge lookup after attention | Where much factual knowledge is stored; bigger models tend to know more facts |
| Layer Norm | Rescales activations to a stable range each layer | Why training does not collapse |
| Residual | Skip connections carry the original signal forward | How 96-layer networks stay trainable |
| Positional Enc. | Injects token order since attention ignores position | One reason context window limits exist |
| Enc. vs Dec. | Bidirectional understanding vs causal generation | Why RAG needs two separate model calls |

You do not need to implement any of this. But the next time you are debugging a prompt, designing a RAG pipeline, or choosing between an embedding model and a generation model, you will know exactly which part of the Transformer to look at.

If you want to go deeper, the original Attention Is All You Need paper is surprisingly readable once you have this foundation. The Hugging Face Transformers docs are also a good next step for seeing how these concepts map to real model code. If you are building on top of LLMs, my post on LLM tokenization covers how text becomes tokens before it ever reaches the transformer architecture.


Prabhat Kashyap

Senior Technical Architect @ HCL Tech · working with Leonteq Security AG. 10+ years building distributed systems and fintech platforms. I write about the things I actually debug at work: the messy, non-obvious parts that don't make it into official docs.